- Full Conference Pass (FC)
- Full Conference One-Day Pass (1D)
- Basic Conference Pass (BC)
- Student One-Day Pass (SP)
- Experience Pass (EP)
- Exhibitor Pass (EP)
- Electronic Theater Ticket (ET)
- Reception Ticket (RT)

Join the international SIGGRAPH Asia community for festive and adventurous events that illuminate the past, present, and future of computer graphics and interactive techniques.

Exploring Red Planets and Metal Worlds: How JPL turns Dreams into Reality [Includes Opening & Awards Ceremony]

Date: Wednesday, December 5th

Time: 11:00am - 12:45pm

Summary: From the Curiosity rover on Mars to the Cassini orbiter at Saturn, NASA’s Jet Propulsion Laboratory has a long history of creating, building and flying missions that explore our solar system. In January 2017, Psyche, a mission led by Arizona State University (ASU) and managed by JPL, was selected as the latest addition to NASA’s Discovery program. Psyche will explore a unique body in our solar system: a 200 km diameter world made almost entirely of metal. David Oh, former lead flight director for the Curiosity Rover and Systems Engineering Manager for the Psyche mission, will describe how JPL works with teams of scientists and engineers to create these unique and innovative space missions, taking wild ideas from initial conception through development to the decision to fund for flight. David will also describe how JPL encourages collaboration and innovation, creating the conditions where diverse teams can excel at technically difficult tasks and succeed in a risk-averse environment.

Beyond the Uncanny Valley: Creating Realistic Virtual Humans in the 21st Century

Date: Wednesday, December 5th

Time: 2:15pm - 4:00pm

Summary: Bridging the Uncanny Valley was once the Holy Grail for every roboticist and every computer graphics, animation and video games professional. This panel gathers acclaimed professionals from those fields for an exploration of the State of the Art in creating realistic Virtual Humans. Does the concept of the Uncanny Valley still stand in 2018? What are the next steps, and where do research efforts need to be directed? The discussion will be a great opportunity to find out the devices used by each discipline to achieve a greater suspension of disbelief.

Beyond Human Vision: The Future of 4K and 8K

Date: Wednesday, December 5th

Time: 4:15pm - 6:00pm

Summary: With the launch of 4K/8K satellite broadcasting in Japan on December 1, 2018, 4K/8K technology and content creation have entered a new era. The range of 4K/8K applications is widening, extending beyond broadcasting to CG creation, interactive applications, public viewing, medical applications, and so on.

In this session, the speakers will give presentations and demonstrations on: 1) key technologies of 4K/8K production and broadcasting, such as image acquisition, display and video coding, and 2) 4K/8K content creation examples from broadcasters, CG creators and an underwater cameraperson.

For this session, an 8K projector will be provided at the venue to demonstrate 8K content.

Computer Animation Festival - Electronic Theater - PreShow: AI DJ Project - A dialog between human and AI through music

Date: Wednesday, December 5th

Time: 4:15pm - 5:00pm

Summary: “AI DJ Project” is a live performance featuring an Artificial Intelligence (AI) DJ playing alongside a human DJ. Utilizing various deep neural networks, the software (AI DJ) selects vinyl records and mixes songs. Playing alternately, each DJ selects one song at a time, embodying a dialogue between the human and AI through music. DJ-ing “Back to Back” serves as a critical investigation into the unique relationship between humans and machines.

Note: To attend the Opening Pre-show of the Computer Animation Festival, you must purchase a ticket for the 5:00pm session on Wednesday, December 5th. The Opening Pre-show will commence at 4:15pm, followed by the 5:00pm Electronic Theater session. You can still attend the Electronic Theater if you cannot make it to the Opening Pre-show.

SIGGRAPH Asia 2018 Reception

Date: Wednesday, December 5th

Time: 7:30pm - 9:30pm

Summary: We invite you to attend the official SIGGRAPH Asia 2018 Reception. Mingle with the SIGGRAPH Asia 2018 community. Greet old friends, share a toast with colleagues, and meet the thinkers from Asia and around the world who are shaping the future of computer graphics and interactive techniques. The location of the venue is reachable by train. Additional and/or stand-alone tickets can be purchased online and on-site. Tickets are limited and it is recommended to purchase them online.

Cinematography of Incredibles 2 - Function and Style

Date: Thursday, December 6th

Time: 9:30am - 10:30am

Summary: Exploring the visual language of Incredibles 2, we will cover influences and motivations both new and familiar to this sequel. Together we will explore visual concepts, and then talk in detail about our methods for realizing those designs to achieve our cinematic goals.

Computational Origami: from Science to Sculpture

Date: Thursday, December 6th

Time: 11:45am - 12:45pm

Summary: I like to blur the lines between art and mathematics, by freely moving from designing sculpture to proving theorems and back again. Paper folding is a great setting for this approach, as it mixes a rich geometric structure with a beautiful art form. Mathematically, we are continually developing algorithms to fold paper into any shape you desire; with Tomohiro Tachi, our new Origamizer algorithm enables efficient watertight folding of any polyhedral surface, such as the classic Stanford bunny or Utah teapot.

Sculpturally, we have been exploring curved creases, which remain poorly understood mathematically, but have potential applications in robotics, deployable structures, manufacturing, and self-assembly. By integrating science and art, we constantly find new inspirations, problems, and ideas: proving that sculptures do or don't exist, or illustrating mathematical beauty through physical beauty. Collaboration, particularly with my father Martin Demaine, has been a powerful way for us to bridge these fields.

Lately we are exploring how folding changes with other materials, such as hot glass, opening a new approach to glass blowing, and finding new ways for paper and glass to interact.

From Gollum to Thanos: Characters at Weta Digital

Date: Thursday, December 6th

Time: 5:00pm - 6:00pm

Summary: To be updated

Behind the scenes of Solo - A Star Wars Story

Date: Friday, December 7th

Time: 9:00am - 10:45am

Summary: To be updated

New Generation Household Robot’s Concept [Includes Closing of SA18 & Opening of SA19 Ceremony]

Date: Friday, December 7th

Time: 11:00am - 12:45pm

Summary: To be updated

MR360 Live: Immersive Mixed Reality with Live 360° Video

Date: Friday, December 7th

Time: 4:00pm - 4:15pm

Summary: DreamFlux presents MR360 Live, a new way to create immersive and interactive Mixed Reality applications. It blends 3D virtual objects into live streamed 360 videos in real-time, providing the illusion of interacting with objects in the video.

From a standard 360° video, we automatically extract important lighting details, illuminate virtual objects, and realistically composite them into the video. Our MR360 toolkit runs in real-time and is integrated into game engines, enabling content creators to conveniently build interactive mixed reality applications.

We demonstrate applications for augmented teleportation using 360° videos. This application allows VR users to travel to different 360° videos. The user can add and interact with digital objects in the video to create their own augmented/mixed reality world. Using a live streaming 360° camera, we will travel to and augment the Real-Time Live! stage in front of a live audience.

An Architecture for Immersive Interactions with an Emotional Character AI in VR

Date: Friday, December 7th

Time: 4:15pm - 4:30pm

Summary: When entering a virtual world, users expect an experience that feels natural. Huge progress has been made with regard to motion, vision and physical interactivity, whereas interactivity with non-playable characters lags behind. This live demo introduces a method that leads to more aware, expressive and lively agents that can answer their own needs and interact with the player. Notably, the live demo covers the use of an emotional component and the addition of a layer of communication (speech) to allow more immersive and interactive AIs in VR.

Live Replay Movie Creation of Gran Turismo

Date: Friday, December 7th

Time: 4:30pm - 4:45pm

Summary: We perform live replay movie creation using the recorded play data of Gran Turismo. Our real-time technologies enable movie editing, such as authoring camerawork and adding visual effects, while reproducing the race scene with high-quality graphics from the play data. We also demonstrate some recent developments aimed at the future.

Pinscreen Avatars in your Pocket: Mobile paGAN engine and Personalized Gaming

Date: Friday, December 7th

Time: 4:45pm - 5:00pm

Summary: We will demonstrate how a lifelike 3D avatar can be instantly built from a single selfie input image, using our own team members as well as a volunteer from the audience. We will showcase some additional 3D avatars built from internet photographs, and highlight the underlying technology such as our light-weight real-time facial tracking system. Then we will show how our automated rigging system enables facial performance capture as well as full body integration. We will showcase different body customization features and other digital assets, and show various immersive applications such as 3D selfie themes and multi-player games, all running on an iPhone.

“REALITY: Be yourself you want to be” VTuber and presence technologies in live entertainment which can make interact between smartphone and virtual live characters

Date: Friday, December 7th

Time: 5:00pm - 5:15pm

Summary: In this presentation we propose and demonstrate the “REALITY” platform and associated software and hardware components to answer needs in the live entertainment sector and the Virtual YouTuber space. We demonstrate how off-the-shelf software and hardware components can be combined to realize a dream in the animation of interactive virtual characters. This presentation is a collaboration between GREE, Inc., Wright Flyer Live Entertainment, Inc. (WFLE), IKINEMA and StretchSense.

"REALITY" is a platform for VTubers and in this presentation we promote the concept of "Be yourself you want to be" with associated services like live entertainment broadcasting service and smartphone applications.

The animation industry in Japan is mature, with numerous animation titles released and a vast number of animation fans. The fans' enjoyment is not limited to watching animation and purchasing related goods; many people want to become a "virtual hero" at events or on different platforms in Japan. Virtual character culture, VOCALOID, and content such as videos and live streaming are already established, and we believe that virtual talent in the form of 2D or 3D avatars is now easily accepted. Furthermore, we demonstrate that the concept can now be achieved from home with readily available and affordable solutions and setups.

In addition, unlike animation, interactive bi-directional communication is possible: the virtual talent responds to comments sent by the audience during live streaming, and Twitter accounts are in turn updated frequently in response. Fans feel fully engaged with their virtual idols.

Interactive virtual characters, which can be set up with current consumer VR technologies, allow one to express oneself much more openly via a virtual avatar without revealing one's appearance. The proposed REALITY platform allows easy creation and animation of different avatars and gives one the opportunity to have multiple 3D avatars to represent one's personality in the virtual space. This makes the new opportunity very appealing to a large audience.

This presentation demonstrates the full concept and presents the features of the REALITY platform for VTubers and interactive virtual characters in general.

More Real, Less Time: Mimic's Quest in Real-Time Facial Animation

Date: Friday, December 7th

Time: 5:15pm - 5:30pm

Summary: Mimic Productions' CEO, Hermione Mitford, will present a live-stream demonstration of detailed facial animation in real-time, utilizing her photo-real 3D digital-double. The presentation will include a speech from Mitford (and her avatar) addressing Mimic's technological approach, as well as the corresponding applications for the technology. A specific focus will be placed on realism and the details of the human face.

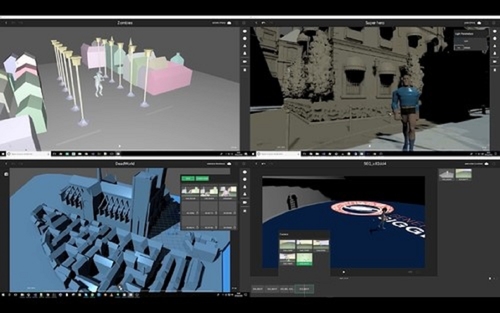

The Power of Real-Time Collaborative Filmmaking 2

Date: Friday, December 7th

Time: 5:30pm - 5:45pm

Summary: We propose to have two people on stage and two more located in Paris. We will then demonstrate remote work with real-time feedback. Each of us will be responsible for creating a short sequence. The goal is to show that with a real-time unified collaborative workflow (CUP® workflow) we can produce a rather complex movie in just a few minutes of work. We will demonstrate three key features of our solution live on stage. First, real-time collaborative editing and a unified workflow: we create a 3D animation movie in a single application and modify each other’s work with instant feedback. Then we will load the created scenes on a smartphone that will be used as a virtual camera (with the help of augmented reality). Finally, we will show a version of the movie rendered live in real-time on the server with RTX technology.

Real-time character animation of BanaCAST (BANDAI NAMCO Studios Inc.)

Date: Friday, December 7th

Time: 5:45pm - 6:00pm

Summary: High-quality real-time CG character animation by BANDAI NAMCO Studios Inc., combining the latest motion capture technology with a game engine.

Technical Papers Fast Forward

Date: Tuesday, December 4th

Time: 6:00pm - 8:00pm

Summary: An entertaining, illuminating summary of SIGGRAPH Asia's 2018 Technical Papers in an exciting two-hour session! The author(s) of each paper are allowed a little less than a minute to wow the crowd with their results and entice attendees to hear their complete paper presentation later in the week.